Why AI’s Future Is Heterogeneous

Sprinting into the New Year

For those of us following the AI infrastructure world over the past year, it was eventful to say the least. In appropriate fashion, 2025 wrapped up with a $20B mega-deal between NVIDIA and Groq and SoftBank funding the rest of their $40B OpenAI investment to fuel the Stargate buildout. Then 2026 kicked off with Jensen unveiling the Vera Rubin promising multiples of efficiency gains and xAI committing $20B to a 3rd Colossus data center in Mississippi.

Compute is the lifeblood of AI progress. The leading players are sprinting at full speed to accelerate construction timelines and lock up precious supply of chips, energy, memory, and manufacturing capacity. Calculated moves and strategic deals are shaping how the next few years will pan out. Where is all of this taking us, you may ask? Based on conversations across model builders, hyperscalers, data centers, and semiconductor companies, here’s a glimpse into what we’re seeing.

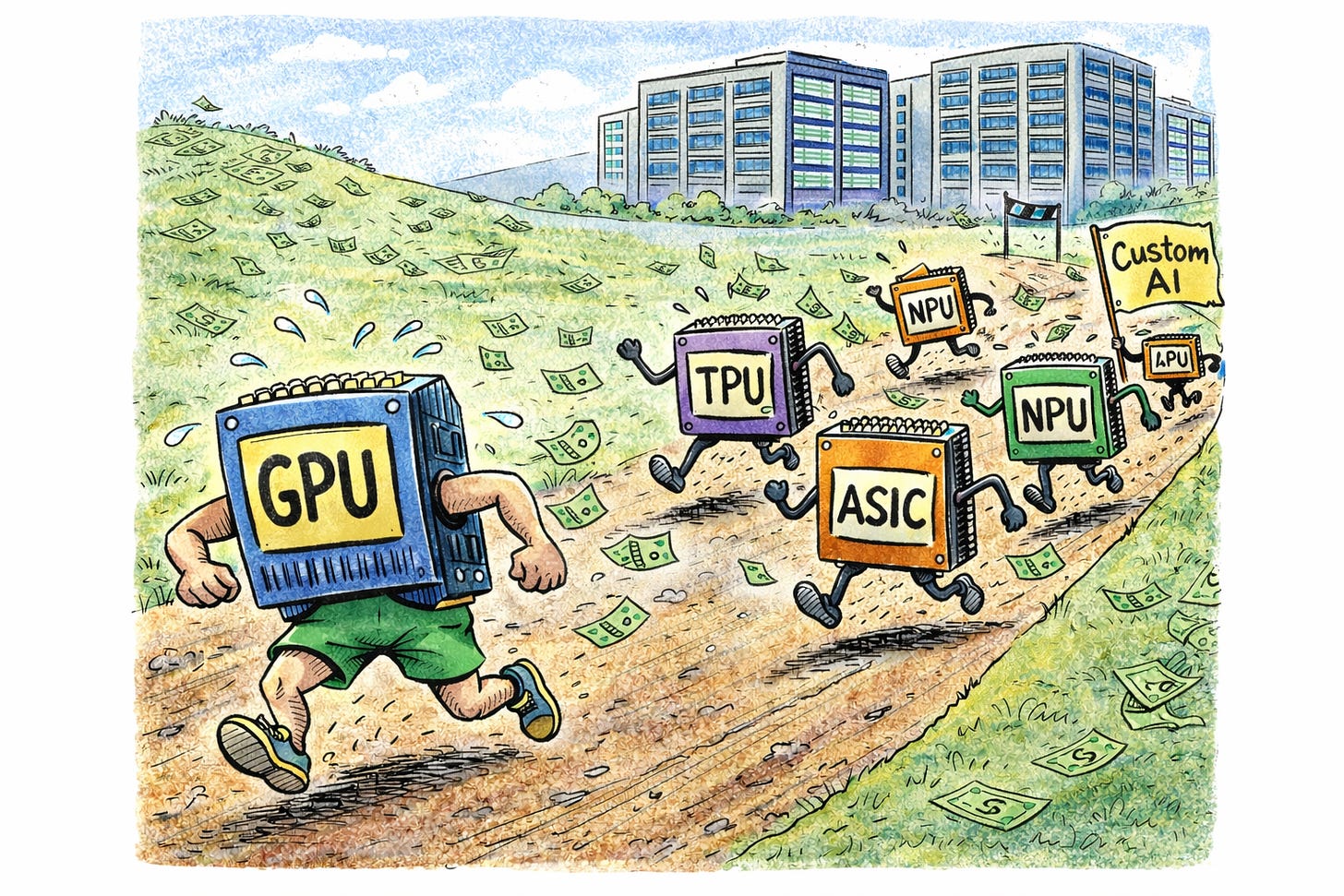

The punchline: we are moving to a world of heterogeneous compute. Heterogeneity isn’t just about performance; it’s about making AI economically viable at society-wide scale. Over the next few years we will see a diverse hardware stack emerge to power the wide-ranging collection of models and AI use cases.

Let’s imagine it is 2030.

The AI compute world has fractured into a mosaic of silicon specialties, stitched together into complete systems. The march of ever-increasing specialization has continued, with individual chips sacrificing flexibility for performance. As model architectures diversify and workloads fragment across pre-training, post-training, and inference, the assumption of a single optimal chip breaks down.

NVIDIA continues to dominate the landscape with their tightly integrated systems, but they are no longer the monopoly. Their acquihire and licensing deal with Groq spurred competitors into action and highlighted an important truth: even the most dominant general-purpose platforms need specialized silicon to cover the full AI lifecycle. Hyperscale silicon is thriving with Google TPUs and AWS Trainium/Inferentia leveraged internally with success and now sold to customers at volumes. Multi-sourcing chips has become a norm. There is now sufficient competitive pressure and credible alternatives to bring GPU gross margins back toward historical norms. Microsoft and Meta have made splashy acquisitions to invigorate their semiconductor efforts, preventing over-reliance on external vendors and driving improved internal economics. In-housed chip efforts at AI labs like OpenAI and xAI are bearing fruit. AMD, Qualcomm, and Intel are in market, offering openness and lower prices which meet a segment of market needs. A select group of AI semiconductor startups break out and are ramping volumes, carving out specialized wedges across low power, low latency, memory capacity, and dedicated model architectures.

Data centers evolve into multi-staged factories of compute. A pipeline of silicon systems serve different needs across the AI development lifecycle, creating an assembly line of intelligence, segmented across use cases. Dense training clusters with tightly networked, coherent GPUs remain the workhorse, but racks of inference-focused chips sit intermingled, as the disparate phases of model training interleave with advancements in reinforcement and continual learning. Operators increasingly co-design infrastructure with workload characteristics in mind, as every percentage point of increased utilization results in bottom line impact.

The global data center build out climbs towards 100 GW+. Massive clusters continue to make headlines and brownfield sites are repurposed with lagging-generation chips that fit the power envelope for inference close to users. The energy bottleneck continues to persist but grid scale renewables and innovative engineering keep the lights on. Yet progress isn’t linear as we see blips, construction delays, and underwater economics which cause certain vendors to fall off and markets to hold their breath.

Increased openness drives a new focus on connectivity as bottlenecks shift. Networking sees cost effective co-packaged optics come to market, supporting scale-up and scale-out needs, and allowing for ever-denser racks and multi-campus training. Hyperscalers lean into open source networking standards and challengers to Arista and Broadcom gain traction by rethinking network design in this AI-first world.

Heterogeneity doesn’t stop at the data center boundary. Compute also shifts to the physical world as humanoids, generalizable robotic systems, and AI-enabled wearables start to see adoption. Millions of units begin shipping and every action starts with the machine’s AI processor. Edge compute grows and represents a fundamentally different paradigm than data center racks. Every form factor necessitates its own delicate balance of power, communications, latency, and performance tradeoffs that will be enabled by a wide-ranging fleet of specialized chips.

However, all of this specialization isn’t free. This shift introduces real friction from fragmented toolchains, lack of portability, and increased complexity. A new software ecosystem emerges to help unify and optimize the increasingly diverse set of models across all of the potential hardware targets. Orchestration grows in criticality as heterogeneity rises.

Ultimately, the conversation shifts to total cost of ownership. Today’s models already have the capabilities to fuel meaningful GDP growth. Increasingly, the cost of compute is the bottleneck for unlocking new use cases and economic progress, pushing the industry towards heterogeneity.

So why all the investment today?

Working backward from this vision clarifies the flurry of strategic moves across the ecosystem. Acquisitions, deal making, and “king-making” are defensive and offensive at the same time. Companies are hedging against being left behind, while betting on the next wave of AI infrastructure. A more heterogeneous compute stack isn’t optional. It’s required for AI to scale economically and reach the breadth of use cases the real world demands. No single chip can serve that diversity. The winners won’t be those who build the fastest chip in isolation, but those who deliver complete systems that collapse complexity across silicon and software to solve real problems.

This shift won’t happen overnight. Chips take years to design and data centers take years to build. What we’re seeing is the earliest phase of a decade-long, multi-trillion-dollar buildout. Expect 2026 to bring more strategic moves, heavier capital deployment, and clearer signals that the AI ecosystem is broadening beyond a single stack.

Many of these ideas deserve deeper dives, but it feels fitting to start the year with the macro picture. There is still enormous engineering work ahead. If you’re building toward this heterogeneous future, or see a different path emerging, let’s talk.